It was exactly two years ago (Nov 2022) that I wrote about Software 2.0 (a term coined by Andrej Karpathy) and argued that we should expect a rapid and meaningful shift from systems performing deterministic tasks (think ERP, RPA etc.) to learning, adaptive systems where the “source code comprises of a 1/neural net architecture 2/dataset that defines the desirable behavior in the form of weights and a learning process like RL”. Many of us in the industry were excited about how LLMs would help enterprises re-imagine digital transformation dramatically.

As we end 2024 (and almost 2 years of the Generative AI frenzy!), it is becoming clear that the impact has been, well, a little less spectacular. While we see every enterprise (literally!) feverishly experimenting with GenAI POCs, we see only isolated pockets of impact delivered from GenAI, and even fewer instances of the magical promise of large-scale transformation. You can feel the growing frustration in many enterprises.

As I reflect on this, here is my hypothesis: In the first wave of GenAI deployments, the infatuation with LLMs seems to have blinded us into narrow thinking. Before I get into this, it is useful to step back and put down the canonical axioms (i.e. what good looks like) on which human problem-solving capabilities have been built:

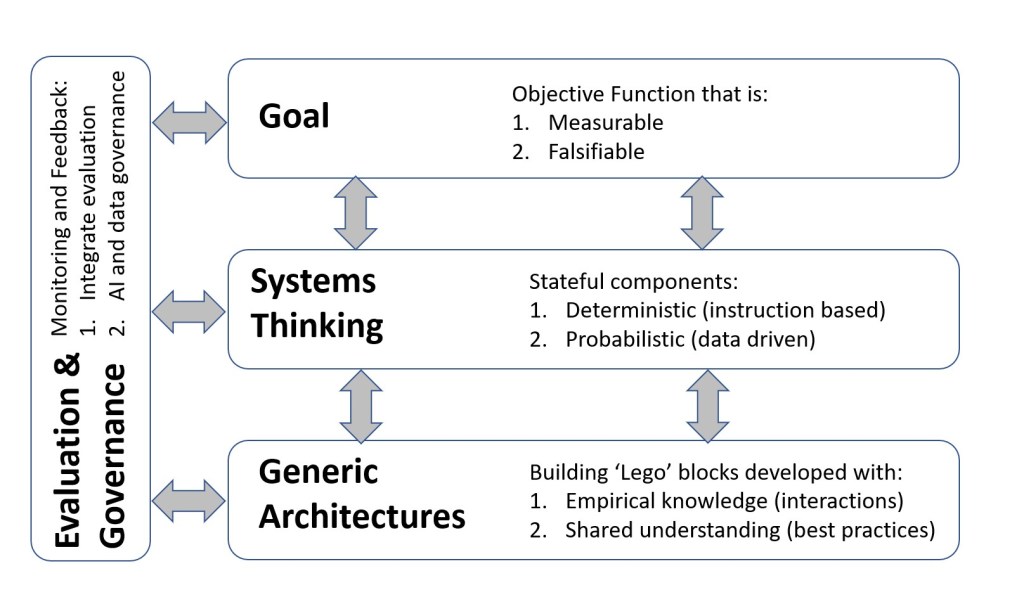

- Define a goal: Start with a measurable and falsifiable outcome. Measurable so we can objectively and unambiguously determine the goal, and falsifiable so we can objectively know whether or not we have hit it.

- Systems thinking: Build stateful systems with a combination of deterministic (which always return the same output for a given input) and non-deterministic (probabilistic output based on input data) steps. Establish clear entry, exit criteria, and decision trees for next steps.

- Generic architectures: Start with a series of foundational blocks and iteratively build a library of ‘lego blocks’ through empirical knowledge (feedback from interacting with the environment) and shared understanding (normative practices built from collective experiences).

- Monitoring and evaluation: Monitor the effectiveness of actions and update the mental models on an ongoing basis. This is a combination of efficacy (did we get the right outcome?) and correctness (did we act according to a pre-defined ethical/legal framework?).

To be sure, this is not exhaustive: it focuses primarily on problem solving and does not go into concepts like motivation, purpose etc. (a fascinating topic in itself, but for another day!).
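To make the "define a goal" and "systems thinking" axioms a little more concrete, here is a minimal sketch in Python. Everything in it is hypothetical and chosen only for illustration: the ticket-handling scenario, the names `State`, `classify`, `draft_reply`, `goal_met` and `run`, and the 120-character limit. The random choice merely stands in for a probabilistic model call; it is not how any particular product works.

```python
import random
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class State:
    """Context carried across steps; this is what makes the system stateful."""
    ticket: str
    category: Optional[str] = None
    draft: Optional[str] = None
    log: List[str] = field(default_factory=list)


def classify(state: State) -> State:
    # Deterministic step: the same ticket always yields the same category.
    state.category = "billing" if "invoice" in state.ticket.lower() else "general"
    state.log.append(f"classify -> {state.category}")
    return state


def draft_reply(state: State, rng: random.Random) -> State:
    # Non-deterministic step: a random opener stands in for a sampled model output.
    opener = rng.choice(["Thanks for reaching out.", "Sorry for the trouble.", ""])
    state.draft = f"{opener} We are reviewing your {state.category} request.".strip()
    state.log.append("draft_reply")
    return state


def goal_met(state: State) -> bool:
    # Measurable, falsifiable exit criterion (an assumption for this sketch):
    # the reply names the category and stays within 120 characters.
    return (
        state.draft is not None
        and state.category is not None
        and state.category in state.draft
        and len(state.draft) <= 120
    )


def run(ticket: str, max_attempts: int = 3, seed: int = 0) -> State:
    if not ticket.strip():  # entry criterion
        raise ValueError("empty ticket")
    rng = random.Random(seed)
    state = classify(State(ticket=ticket))
    for attempt in range(1, max_attempts + 1):
        state = draft_reply(state, rng)
        if goal_met(state):
            state.log.append(f"goal met on attempt {attempt}")
            return state
    state.log.append("escalate to human")  # decision-tree fallback
    return state
```

Calling `run("Where is my invoice?")` returns a `State` whose `log` records each step taken, which is a crude form of the monitoring described in the last axiom.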

Just looking at this list, it is apparent that most LLM implementations today are nowhere close to this level of problem-solving capability. In fact, when I asked one of our customers where an LLM-based chatbot would fit in the org structure if it were an employee, their reply was: “An intern, at best. Even there, definitely not good enough to be paid minimum wage!”. Although this was in jest, it is not far from the truth.

Challenges with current LLM implementations

Looking at the current implementations, a few dominant patterns may point to why we are not yet seeing a whole lot of truly impactful business use-cases:

- Prompt engineering with a combination of prompt templates, Chain of Thought for reasoning, augmented by RAG (for enterprise context). However, this is still a linear process and in a majority of the cases, stateless (although that is changing).

- Manual adjustments that require data scientists to keep going in and adjusting prompt templates, guardrails etc. on an ongoing basis.

- Both of the above are model dependent, and in a world where models are changing at an astonishing pace, this creates challenges when data scientists are looking to switch to a ‘better performing model of the day’.
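As a rough illustration of the first pattern, here is a toy version of a linear, stateless pipeline in Python. The names `PROMPT_TEMPLATE`, `retrieve`, `build_prompt` and `answer` are made up for this sketch, the keyword-overlap retrieval is only a stand-in for a real vector search, and the model is passed in as a plain function rather than a real LLM client.

```python
from typing import Callable, List

# Hand-written template: typically tuned for one model and edited manually.
PROMPT_TEMPLATE = (
    "Context:\n{context}\n\n"
    "Question: {question}\n"
    "Think step by step, then answer."
)


def retrieve(question: str, docs: List[str], k: int = 1) -> List[str]:
    # Toy retrieval: rank documents by the number of words shared with the question.
    words = set(question.lower().split())
    ranked = sorted(
        docs,
        key=lambda d: len(words & set(d.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question: str, docs: List[str]) -> str:
    context = "\n".join(retrieve(question, docs))
    return PROMPT_TEMPLATE.format(context=context, question=question)


def answer(question: str, docs: List[str], call_model: Callable[[str], str]) -> str:
    # One pass: retrieve -> fill template -> call model. Nothing is remembered
    # between calls, and nothing checks whether the answer met a goal.
    return call_model(build_prompt(question, docs))
```

Note what is missing compared with the axioms earlier: there is no state, no exit criterion and no feedback loop, and the template wording is exactly the kind of thing that tends to need rework when the underlying model changes.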

What next

I believe that we are nearing the end of the hype around Large Language Models, and while Agentic AI seems to be the next big buzzword, I do think we need to broaden our thinking and take a deeper look at integrated systems that combine the enormous potential of LLMs with other elements (e.g. structured data systems) to build flexible, performant systems. It is time to embrace the vision of Software 2.0 and work towards making it a reality.
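To give a flavour of what combining an LLM with a structured data system could look like, here is a deliberately small Python sketch using the standard-library `sqlite3` module. The `orders` table, its rows, and the functions `make_db`, `extract_region`, `total_for_region` and `answer` are all invented for illustration, and `extract_region` is a keyword-matching placeholder for where a model call would sit.

```python
import sqlite3
from typing import Optional


def make_db() -> sqlite3.Connection:
    # In-memory table standing in for an enterprise system of record.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?)",
        [("EMEA", 120.0), ("EMEA", 80.0), ("APAC", 50.0)],
    )
    return conn


def extract_region(question: str) -> Optional[str]:
    # Placeholder for the non-deterministic part: an LLM would interpret the
    # question here. Keyword matching keeps the sketch runnable offline.
    for region in ("EMEA", "APAC"):
        if region.lower() in question.lower():
            return region
    return None


def total_for_region(conn: sqlite3.Connection, region: str) -> float:
    # Deterministic part: the figure comes from the database, not the model.
    row = conn.execute(
        "SELECT COALESCE(SUM(amount), 0) FROM orders WHERE region = ?",
        (region,),
    ).fetchone()
    return float(row[0])


def answer(conn: sqlite3.Connection, question: str) -> str:
    region = extract_region(question)
    if region is None:
        return "I could not tell which region you mean."
    return f"Total orders for {region}: {total_for_region(conn, region):.2f}"
```

The design choice worth noticing is the division of labour: the language component only interprets the question, while the number itself is computed by a query that returns the same result every time.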

There are some fantastic resources to start thinking about this area:

- The paper that helped me start thinking about this: The Shift from Models to Compound AI Systems

- Outstanding introductory lecture from Stanford: LLMs get the hype, but Compound Systems are the future of AI

- Databricks is probably one of the earliest off the blocks on this. Here’s their documentation

It is truly exciting to be in this space at this moment in time and if we get this right, we have a really good shot at using AI to truly solve some of the more complex, meaningful problems and deliver transformative business impact.
