The other day, I saw a t-shirt slogan: ‘Update your priors!’ Nerdy t-shirt slogans like this are not uncommon in Silicon Valley. This one caught my eye because it has never been more relevant, given the current swirl of data and analysis around Covid-19. Epidemiologists and statisticians are in a race to better understand what works and what doesn’t, and the implications are enormous as governments all over the world struggle to navigate policy choices in response to the virus.

First, a quick recap on how we operate as individuals. For the most part, we start with a set of beliefs, which are typically fuzzy and not backed by a whole lot of evidence. Then, as evidence comes in, we use it as a trigger for updating those beliefs. And here is the most important part: this process doesn’t stop. As we keep getting evidence, we keep moving iteratively towards greater certainty and, in the process, build a coherent body of knowledge. Of course, we are not always that structured. Often we operate at the extremes: from holding a fixed set of beliefs that we find hard to modify in the face of contrary evidence, to flipping with every new piece of evidence, and everything in between (there is a list of cognitive biases that keeps growing all the time).

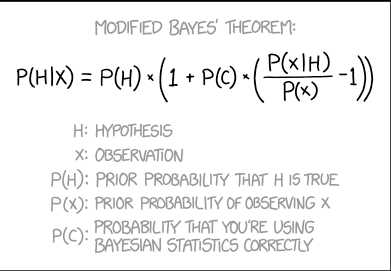

Bayesian reasoning is, in fact, very similar to this. It provides a structured way of approaching problems, built on two major ideas:

- The focus should be less on finding the absolute answer and more on establishing a reasonable range of possibilities (e.g. an error range around an estimate). This recognizes the inherent volatility and variability that exists around us.

- Based on incremental evidence, update the solution set (e.g. narrowing or broadening your error ranges or, in some cases, even overhauling the inputs). This has to be done iteratively, at pre-determined intervals or, even better, every time you have updated information.
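To make these two ideas concrete, here is a minimal sketch in Python. It is only an illustration under assumptions of my own: the belief is about an unknown rate between 0 and 1, it is represented by a Beta distribution (a standard textbook choice, not something specific to any Covid-19 study), and the batches of evidence are made-up numbers. The function names are hypothetical.

```python
from math import sqrt

def update_beta(alpha, beta, successes, failures):
    """Bayesian update of a Beta(alpha, beta) belief after new yes/no evidence."""
    return alpha + successes, beta + failures

def beta_mean(alpha, beta):
    """Best single guess for the unknown rate."""
    return alpha / (alpha + beta)

def beta_sd(alpha, beta):
    """Spread of the belief: a smaller value means a narrower range."""
    n = alpha + beta
    return sqrt(alpha * beta / (n * n * (n + 1)))

# Start fuzzy: Beta(1, 1) treats every rate between 0 and 1 as equally likely.
belief = (1.0, 1.0)

# Hypothetical batches of evidence, as (successes, failures). Illustrative only.
batches = [(3, 7), (12, 28), (30, 70)]

history = [belief]
for successes, failures in batches:
    belief = update_beta(*belief, successes, failures)
    history.append(belief)

for alpha, beta in history:
    print(f"mean={beta_mean(alpha, beta):.3f}  sd={beta_sd(alpha, beta):.3f}")
```

The answer at every step is a range (a mean plus a spread) rather than a single number, and each batch of evidence becomes the starting point for the next update. With these consistent batches the spread keeps shrinking; evidence that contradicts the current belief would instead pull the mean towards the new data.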

This is most evident in epidemiology studies on Covid-19 and the breathtaking speed with which data is coming in. We are learning that infection rates depend on a wide range of factors: social networks (behavioral); culture and socioeconomic class (demographic); weather, air-conditioning and all sorts of unknowns. The important thing is that researchers are not sitting around scratching their heads. Instead, they are using Bayesian inference tools to keep chipping away.

One example: there is something called the basic reproduction number (R0), defined as the number of people likely to get infected by one case in a population where everyone is susceptible to infection. The standard method would be to assume a certain number (based on historical rates), build the prediction model on that number, and use a confidence interval to capture the uncertainty around it. The Bayesian approach differs in a fundamental way: begin by treating the reproduction number not as a single value but as a probability distribution (which could be inferred from responses to epidemics and pandemics in the past). In other words, ‘the priors’. And once again, ‘update the priors’ as more data rolls in. This is especially useful when trying to predict the impact of (riskier) tail events (e.g. superspreader events, as Florida discovered painfully in the last few weeks).
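Here is a rough sketch of what ‘updating the priors’ on a reproduction number could look like. It rests on simplifying assumptions of my own and is not how any particular research group does it: each infected person is assumed to infect a Poisson-distributed number of others, the prior is a Gamma distribution, and the weekly contact-tracing counts are invented for illustration. A plain Poisson model also understates superspreader events, which are usually handled with more dispersed distributions.

```python
from math import sqrt

def update_gamma(shape, rate, secondary_cases):
    """Gamma-Poisson update: add the observed infections and the number of cases traced."""
    return shape + sum(secondary_cases), rate + len(secondary_cases)

def gamma_mean(shape, rate):
    """Current best estimate of the reproduction number."""
    return shape / rate

def gamma_sd(shape, rate):
    """Spread of the current belief about the reproduction number."""
    return sqrt(shape) / rate

# Assumed prior, loosely 'based on past epidemics': mean 2.5, deliberately wide.
prior = (5.0, 2.0)

# Hypothetical data: how many people each traced case infected, week by week.
weekly_counts = [
    [3, 2, 4, 1, 3],
    [2, 2, 1, 3, 0, 2],
    [1, 0, 2, 1, 1, 0, 1],
]

posterior = prior
estimates = [(gamma_mean(*posterior), gamma_sd(*posterior))]
for counts in weekly_counts:
    # Last week's posterior becomes this week's prior.
    posterior = update_gamma(*posterior, counts)
    estimates.append((gamma_mean(*posterior), gamma_sd(*posterior)))

for week, (mean, sd) in enumerate(estimates):
    print(f"week {week}: R estimate {mean:.2f} (sd {sd:.2f})")
```

The point is the loop, not the particular distributions: nothing is fixed up front, and every new week of data reshapes both the estimate and the uncertainty around it.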

So what?

I have touched on this topic of Bayesian inferential thinking several times, and it matters most at times like these. If you are a more-than-casual reader interested in truly understanding the studies related to Covid-19 (and, consequently, the policy decisions), you would do well to brush up on high-school probability.

And just as importantly, if you are tasked with making decisions in a business environment that keeps shifting, often dramatically, you should challenge your teams to adopt Bayesian analysis methods. And while you are at it, make sure you check with your analysts whether, and how well, they are acting on ‘Update your priors!’

Two of my previous posts on this topic:

https://thinklikesocrates.com/2020/05/04/bayes-what-is-the-big-deal/

https://thinklikesocrates.com/2020/05/11/bayes-meets-markov-let-the-chain-reaction-begin/

*Image source: https://www.explainxkcd.com/wiki/index.php/2059:_Modified_Bayes%27_Theorem
